Logstash listening to Filebeat for different log types: install

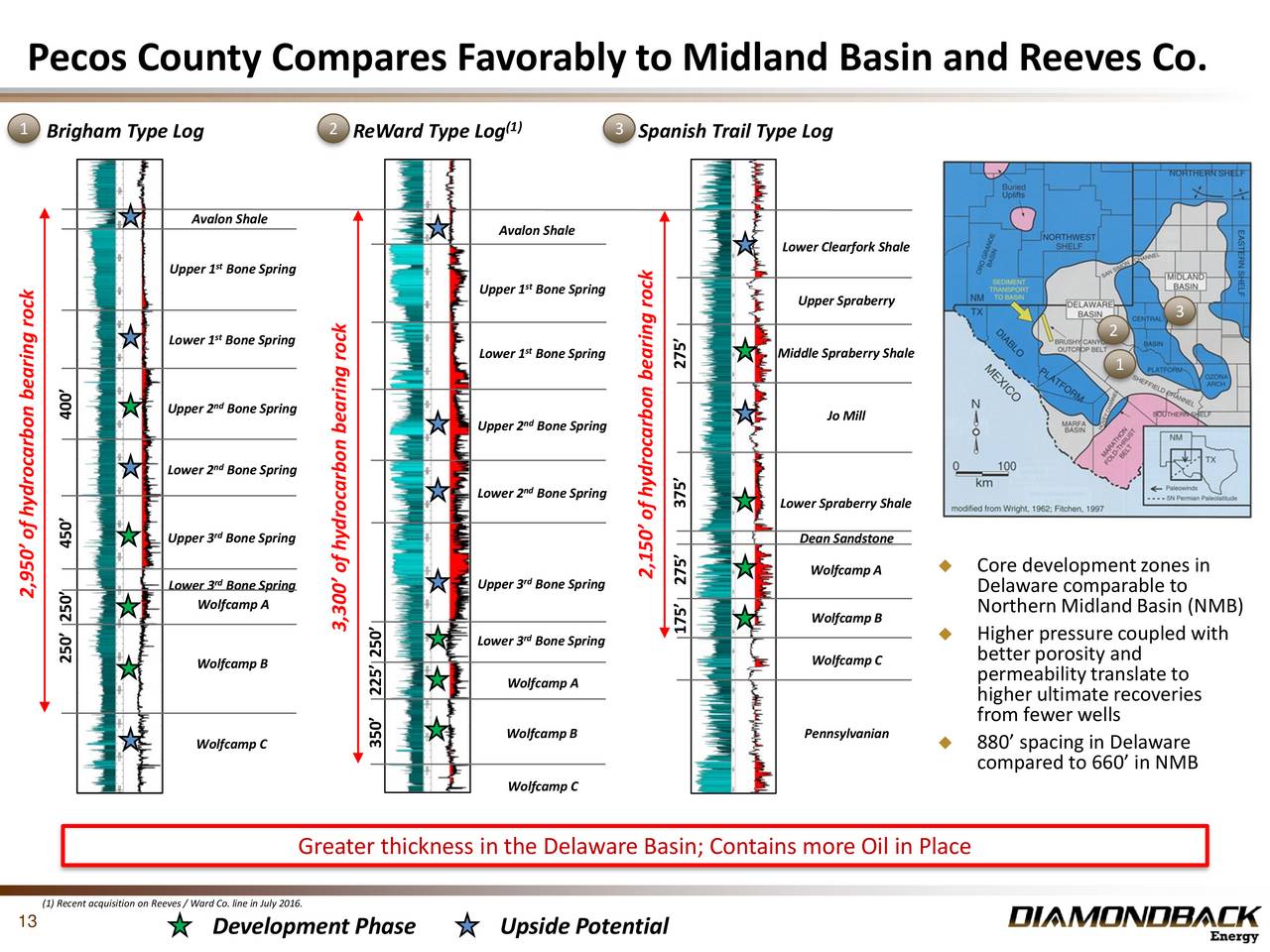

1、 Collect logs directly to ES through Filebeat

Locate filebeat.yml in the Filebeat installation directory and configure two things: the paths of the log files to read, and the output to ES. In the input section, the comments `# Change to true to enable this input configuration.` and `# Paths that should be crawled and fetched.` mark the two settings to edit. If you want the data displayed conveniently in Kibana, configure Kibana as well. For the ES output, set your ES service address in hosts; if a single machine runs only one node, one IP and port is enough.

Start Filebeat with `filebeat -e -c filebeat.yml -d "publish"`. You can then view the log records in the ES index through the elasticsearch-head plug-in and confirm that nginx's access.log and error.log have been shipped. In Kibana, filtering by filebeat-* shows the Filebeat index and the data collected through Filebeat.

Connecting Filebeat directly to ES is simple and direct, but it is not flexible enough when the collected logs need preprocessing.

2、 Collect logs through Filebeat to Logstash and then send them to ES

A Logstash layer can be added between Filebeat and ES to decouple Filebeat from ES. Logstash can preprocess the logs, and it can also send them to storage other than ES. First, install Logstash.
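For the direct Filebeat-to-ES method (method 1), a minimal filebeat.yml might look like the sketch below. It assumes Filebeat 7.x, nginx logs under /usr/local/nginx/logs, and ES and Kibana listening on localhost at their default ports; the host addresses are placeholders, not values from the original setup.

```shell
# Write a minimal filebeat.yml into the current directory.
# Hosts and ports below are assumptions; replace them with your own.
cat > filebeat.yml <<'EOF'
filebeat.inputs:
  - type: log
    # Change to true to enable this input configuration.
    enabled: true
    # Paths that should be crawled and fetched.
    paths:
      - /usr/local/nginx/logs/access.log
      - /usr/local/nginx/logs/error.log

# Optional: only needed for Kibana-side setup such as dashboards.
setup.kibana:
  host: "localhost:5601"

# For a cluster, list every node; for a single node one address is enough.
output.elasticsearch:
  hosts: ["localhost:9200"]
EOF
```

With this file in place, `filebeat -e -c filebeat.yml -d "publish"` starts Filebeat in the foreground and prints the published events.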

Generally speaking, Logstash runs on the collection server, while nginx and Filebeat are installed on the machines being collected from. In this example, Elasticsearch is a cluster composed of three nodes. The logs collected are nginx's access log (access.log) and error log (error.log). After a default nginx installation, the log files are generally in the /usr/local/nginx/logs directory. Where the logs are stored can be configured easily through Logstash, and how you collect and save them depends on your needs.
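One common way to let Logstash tell the two nginx log types apart is to tag each Filebeat input with a custom field and branch on it in the Logstash output. The sketch below assumes Filebeat 7.x, Logstash listening on port 5044, and ES on localhost:9200. The field name `log_type`, the index names, and the file names are hypothetical choices made for illustration.

```shell
# Filebeat side: one input per log type, each tagged with a custom field.
cat > filebeat-nginx.yml <<'EOF'
filebeat.inputs:
  - type: log
    enabled: true
    paths:
      - /usr/local/nginx/logs/access.log
    fields:
      log_type: nginx_access   # hypothetical field name and value
  - type: log
    enabled: true
    paths:
      - /usr/local/nginx/logs/error.log
    fields:
      log_type: nginx_error

# Send to Logstash instead of ES (address is an assumption).
output.logstash:
  hosts: ["localhost:5044"]
EOF

# Logstash side: listen for Beats and route by the custom field.
cat > logstash-nginx.conf <<'EOF'
input {
  beats {
    port => 5044
  }
}

output {
  # Custom Filebeat fields arrive nested under [fields] by default.
  if [fields][log_type] == "nginx_access" {
    elasticsearch {
      hosts => ["localhost:9200"]
      index => "nginx-access-%{+YYYY.MM.dd}"
    }
  } else if [fields][log_type] == "nginx_error" {
    elasticsearch {
      hosts => ["localhost:9200"]
      index => "nginx-error-%{+YYYY.MM.dd}"
    }
  }
}
EOF
```

Keeping the routing in Logstash means the Filebeat agents only need to know the Logstash address, so the storage target can change without touching the collected machines.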

Logstash listening to Filebeat for different log types: how to

Because nginx is powerful and performs well, more and more web applications use it as their HTTP server and reverse proxy. The nginx access log is one of the most important data sources for user behavior analysis and security analysis, so collecting nginx logs effectively and conveniently has become a concern. The ELK technology stack is a well-known tool for collecting and analyzing logs. This article therefore shows, through several examples, how to collect nginx access logs and error logs into ES using Filebeat, Logstash and rsyslog.

ELK is the acronym for three open source projects: Elasticsearch, Logstash, and Kibana.

- Elasticsearch is an open source, full-text search and analytics engine based on the Apache Lucene search engine.
- Logstash is a server-side data processing pipeline that ingests data from multiple sources simultaneously, transforms it, and then sends it to a "stash" like Elasticsearch.
- Kibana lets users visualize data in Elasticsearch with charts and graphs.

A main reason to use ELK is log aggregation and efficient searching.

In general, the ELK architecture works like this:

- Filebeat polls the file and sends the data to Logstash.
- Logstash receives the data, filters and processes it, and sends it to Elasticsearch.
- Elasticsearch stores the data in a persistent store with indexing.
- Kibana pulls data on demand and creates graphs, charts, and reports.

In an enterprise with more than one server, Logstash is usually not run everywhere because it is heavy on resources. Instead, Filebeat runs on the different servers and pushes the data to Logstash.

The setup steps are:

- Download and install ELK and Filebeat on your local system.
- Open the Elasticsearch address in a browser; if Elasticsearch is running fine, it returns a response.
- Create a Logstash configuration file. In that file, line # 5 specifies the port Logstash will listen on, and line # 15 specifies the Elasticsearch server and port to which Logstash forwards the data.
- Run Logstash with the configuration created earlier.
- Create a Filebeat configuration file. In that file, line # 5 specifies the log file to poll; you can add more log files similar to line # 5 to poll with the same Filebeat. Line # 7 specifies the pattern that identifies the start of each log entry. Lines # 8 and 9 are required because a log entry can span more than one line.
- Run Filebeat with the configuration created earlier.
- Go to Kibana -> Management -> Index pattern. A new index, filebeat-7.6.1-2020.03.30, has been created. This index is created because of line # 15 of the Logstash configuration file.
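The Logstash and Filebeat configuration files that the line numbers refer to are not included in this text. The sketch below shows what such a pair might look like for Filebeat 7.x; its line numbers will not match the references above. The log path, the multiline pattern (entries starting with a date such as 2020-03-30), the host addresses, and the file names are all assumptions made for illustration.

```shell
# Logstash pipeline: listen for Beats, forward to Elasticsearch.
cat > logstash-beats.conf <<'EOF'
input {
  beats {
    # Port Logstash listens on for Filebeat connections.
    port => 5044
  }
}

output {
  elasticsearch {
    # Elasticsearch server that receives the data (assumed address).
    hosts => ["localhost:9200"]
    # Index named after the shipping beat, its version, and the date.
    index => "%{[@metadata][beat]}-%{[@metadata][version]}-%{+YYYY.MM.dd}"
  }
}
EOF

# Filebeat config: poll one log file and join multi-line entries.
cat > filebeat-multiline.yml <<'EOF'
filebeat.inputs:
  - type: log
    enabled: true
    paths:
      - /var/log/myapp/app.log   # hypothetical log file to poll
    # A line starting with a date marks the start of a new entry.
    multiline.pattern: '^[0-9]{4}-[0-9]{2}-[0-9]{2}'
    # Lines that do NOT match the pattern are appended to the previous entry.
    multiline.negate: true
    multiline.match: after

output.logstash:
  hosts: ["localhost:5044"]
EOF
```

With Filebeat 7.6.1 shipping data on 2020-03-30, an index setting of this form would yield an index name like filebeat-7.6.1-2020.03.30. The two processes can then be started with `bin/logstash -f logstash-beats.conf` and `filebeat -e -c filebeat-multiline.yml`.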